Live at https://tinygames.ravigopal.com

The code is open-source under the MIT License on GitHub.

My daughter is just over two. Every free kids' app on iPad she used was full of ads. She'd tap anywhere — she's a toddler — and land on a banner. The App Store would open. She'd get confused. I'd have to close it, go back, and start over.

I wanted her to have games she could play without interruptions. The browser is already on every device. So I built the games there.

Every game in the project follows these rules:

- One game per page. HTML, CSS, and JS in separate files. Zero external dependencies.

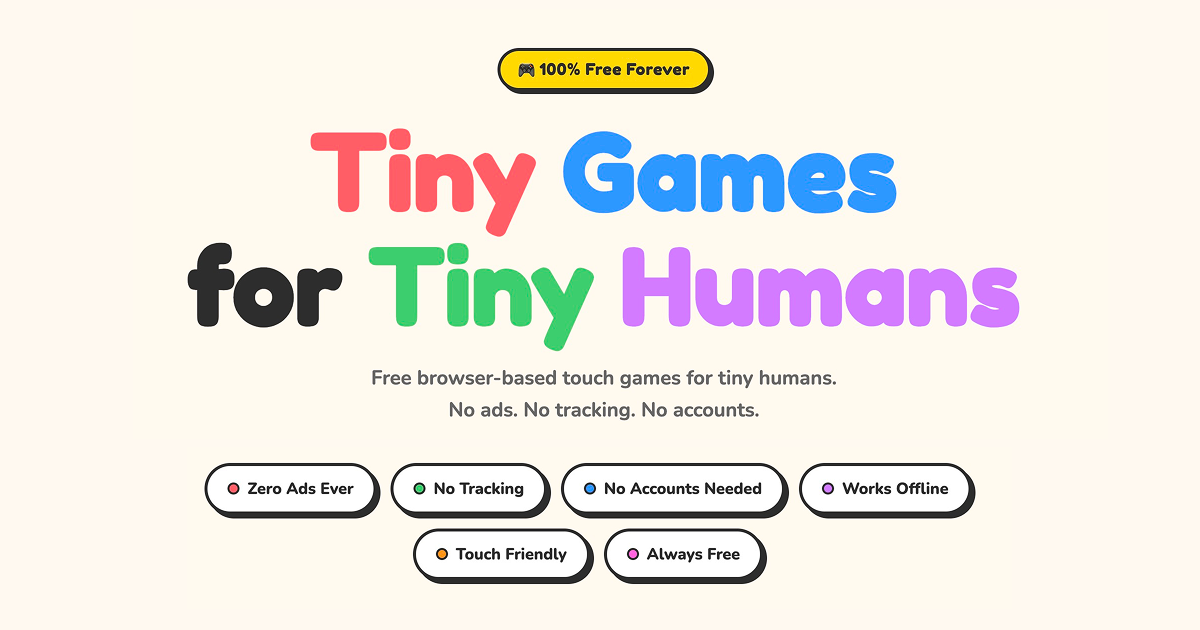

- No ads. No tracking scripts. No accounts. No network requests after first load.

- Touch targets minimum 60px. Built for fingers, not a mouse.

- All sounds generated via the Web Audio API. No audio files.

- No text in the game UI. Everything is visual or audio. She can't read yet.

- Must work on mobile Safari and Chrome with no install.

- Bright, saturated colours chosen for a toddler's developing colour perception.

Tech

Vanilla HTML, CSS, and JavaScript. That's the entire stack. No React, no Vue, no build tool, no bundler, no package manager at runtime.

CSS uses @layer to enforce cascade order across every file: reset → tokens → base → layout → components → animations → utilities. CSS custom properties handle every colour, spacing value, easing curve, z-index, and duration. Changing the visual design of a game means changing token values in one block.

JavaScript uses the Pointer Events API rather than Touch Events. Each finger fires its own pointerdown with a unique pointerId, tracked in a Map. This handles any number of simultaneous touches with the same code path.

Multi-Touch

All games use the Pointer Events API rather than Touch Events. Every finger gets its own pointerdown, pointermove, and pointerup events with a unique pointerId. Active pointers are tracked in a Map keyed by that ID, so each finger has its own state — position, colour, last sound time — independent of every other finger.

This means any number of simultaneous touches just work with no special cases. In Balloon Pop, ten fingers can each pop a different balloon at the same moment. In Paint Splat, the first finger paints in the chosen colour while every additional finger picks a fresh random colour at touch-down and holds it for that stroke.
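As a rough illustration of the per-finger state described above, the tracking could look something like the sketch below. The names `createPointerTracker`, `chosenColour`, and `randomColour` are hypothetical and not taken from the project source; the colour rule follows the Paint Splat description.

```javascript
// Hypothetical helper: one Map entry per active finger, keyed by pointerId.
function createPointerTracker(chosenColour, randomColour) {
  const active = new Map(); // pointerId -> { x, y, colour, lastSoundTime }

  return {
    active,
    down(e) {
      // The first finger uses the chosen colour; later fingers get a random one.
      const colour = active.size === 0 ? chosenColour : randomColour();
      active.set(e.pointerId, {
        x: e.clientX,
        y: e.clientY,
        colour,
        lastSoundTime: -Infinity, // no sound played yet for this finger
      });
    },
    move(e) {
      const state = active.get(e.pointerId);
      if (!state) return; // ignore pointers that never went down (e.g. mouse hover)
      state.x = e.clientX;
      state.y = e.clientY;
    },
    up(e) {
      active.delete(e.pointerId);
    },
  };
}

// Browser wiring, skipped when no DOM is present. The colours are placeholders.
if (typeof window !== 'undefined' && typeof window.addEventListener === 'function') {
  const palette = ['#ff3b30', '#34c759', '#007aff', '#ffcc00'];
  const pick = () => palette[Math.floor(Math.random() * palette.length)];
  const tracker = createPointerTracker(palette[0], pick);
  window.addEventListener('pointerdown', (e) => tracker.down(e));
  window.addEventListener('pointermove', (e) => tracker.move(e));
  window.addEventListener('pointerup', (e) => tracker.up(e));
  window.addEventListener('pointercancel', (e) => tracker.up(e));
}
```

Handling `pointercancel` the same way as `pointerup` keeps the Map from holding stale entries when the browser takes a touch away.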

Two CSS properties are required to make this reliable. touch-action: none on every interactive element tells the browser to hand all touch input straight to JavaScript, preventing it from intercepting gestures for scrolling or zooming. And user-select: none on the body prevents the browser from trying to select text when the user drags their finger across the screen.

Web Audio API

Every sound in every game is synthesised at runtime using the Web Audio API. There are no audio files — nothing to load, nothing to cache, no format compatibility issues.

The AudioContext is never created at page load. Browsers block audio until a user gesture has occurred, so the context is created lazily, on the first pointerdown or keydown. After that, every later sound reuses the same context and plays without any setup delay.
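A minimal sketch of that lazy setup follows, assuming a hypothetical `createLazyAudio` helper. The factory is passed in so the logic can run outside a browser; in the games themselves the details may differ.

```javascript
// Hypothetical helper: returns a getter that creates the context on first call only.
function createLazyAudio(createContext) {
  let ctx = null;
  return function getContext() {
    if (ctx === null) ctx = createContext(); // created once, on first use
    // A context may be suspended by the browser; resume it if so.
    if (ctx.state === 'suspended') ctx.resume();
    return ctx;
  };
}

// Browser wiring, skipped when the Web Audio API is not available.
if (typeof window !== 'undefined' && window.AudioContext) {
  const getContext = createLazyAudio(() => new AudioContext());
  // The first gesture creates the context; { once: true } removes the listener afterwards.
  window.addEventListener('pointerdown', getContext, { once: true });
  window.addEventListener('keydown', getContext, { once: true });
}
```

Sound functions would then call `getContext()` themselves, so whichever gesture arrives first pays the one-time creation cost.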

Each sound type uses a different synthesis approach suited to what it represents:

- Pops and splats: a short noise buffer routed through a bandpass filter, shaped with an exponential gain ramp. Pitch shifts slightly with each tap, stepping through a major scale, so rapid tapping produces a loose melody rather than a flat repeated thud.

- Level-ups and fanfares: multiple oscillators (sine or triangle wave) at specific frequencies, staggered 90–100ms apart, climbing a scale. The ascending sequence gives an immediate sense of reward without needing any visual prompt.

- Drag and swipe strokes: a very short white-noise buffer through a bandpass filter, throttled to one play per 70ms per active pointer. This gives continuous drawing a physical texture without becoming overwhelming.

- Clear and reset: a sawtooth oscillator sweeping from a high frequency down to a low one. The descending pitch sounds like something being wiped away.
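Two of the ideas in the list above, the major-scale pitch sequence and the 70ms per-pointer throttle, reduce to small pure functions. The sketch below is one way to express them; the function names, the 440 Hz root, and the choice to cycle through a single octave are assumptions, not details from the project.

```javascript
// Semitone offsets of a major scale, from the root up to the octave.
const MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11, 12];

// Frequency for the nth tap, cycling through the scale (equal temperament).
// In a pop sound this value could drive the bandpass filter's centre frequency.
function tapFrequency(tapIndex, rootHz = 440) {
  const semitones = MAJOR_SCALE[tapIndex % MAJOR_SCALE.length];
  return rootHz * Math.pow(2, semitones / 12);
}

// Returns true if this pointer may play a stroke sound now, and records the time.
// pointerState is the per-finger object; lastSoundTime is in milliseconds.
function shouldPlayStroke(pointerState, nowMs, intervalMs = 70) {
  if (nowMs - pointerState.lastSoundTime < intervalMs) return false;
  pointerState.lastSoundTime = nowMs;
  return true;
}
```

Keeping `lastSoundTime` on each pointer's own state means one fast-moving finger never silences another.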

All oscillators and buffer sources are created fresh for each sound event and disconnected immediately after they finish. There is no persistent audio graph — no memory to manage, no nodes to reuse.
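The create-then-discard pattern might look roughly like this for a fanfare. This is a sketch under assumptions: `playTone` and `playFanfare` are hypothetical names, and the note frequencies, gain level, and durations are illustrative values rather than the ones the games use.

```javascript
// Plays one short tone with freshly created nodes, then disconnects them.
// ctx is an AudioContext (or a compatible stand-in when testing).
function playTone(ctx, frequency, startDelay = 0, duration = 0.15, type = 'triangle') {
  const osc = ctx.createOscillator();
  const gain = ctx.createGain();
  const t0 = ctx.currentTime + startDelay;

  osc.type = type;
  osc.frequency.setValueAtTime(frequency, t0);
  gain.gain.setValueAtTime(0.3, t0);
  // Exponential ramps cannot reach 0, so fade to a very small value instead.
  gain.gain.exponentialRampToValueAtTime(0.001, t0 + duration);

  osc.connect(gain);
  gain.connect(ctx.destination);

  // Once the oscillator stops, drop both nodes from the graph.
  osc.onended = () => {
    osc.disconnect();
    gain.disconnect();
  };

  osc.start(t0);
  osc.stop(t0 + duration);
}

// Ascending notes staggered 90ms apart (C5, E5, G5, C6 as assumed defaults).
function playFanfare(ctx, frequencies = [523.25, 659.25, 783.99, 1046.5]) {
  frequencies.forEach((f, i) => playTone(ctx, f, i * 0.09));
}
```

Because every call builds its own oscillator and gain node, overlapping notes never share state, and nothing needs to be reset between sounds.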

Accessibility

The primary user can't read and navigates by touch. But both games are also fully keyboard-accessible.

- Skip links on every page jump to the game area.

- ARIA live regions announce score milestones and control changes to screen readers.

- All interactive elements have aria-label. Decorative elements have aria-hidden.

- Keyboard navigation: arrow keys move a cursor, Space triggers the primary action.

- Every swatch and button has aria-pressed to communicate selection state.

- prefers-reduced-motion kills all animations with a single rule block.
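The keyboard rule in the list above (arrow keys move a cursor, Space triggers the primary action) can be sketched as a small function over cursor state. The grid model and the names `handleKey`, `grid`, and `onAction` are hypothetical; the games may track the cursor differently.

```javascript
// Arrow key -> [dx, dy] step. A Map avoids accidental matches on object prototype keys.
const KEY_MOVES = new Map([
  ['ArrowLeft', [-1, 0]],
  ['ArrowRight', [1, 0]],
  ['ArrowUp', [0, -1]],
  ['ArrowDown', [0, 1]],
]);

// Returns the next cursor position; calls onAction(cursor) when Space is pressed.
// key is a KeyboardEvent.key value; grid is { cols, rows }.
function handleKey(cursor, key, grid, onAction) {
  if (key === ' ') {
    onAction(cursor);
    return cursor;
  }
  const move = KEY_MOVES.get(key);
  if (!move) return cursor; // unrelated key: leave the cursor where it is
  const clamp = (v, max) => Math.min(Math.max(v, 0), max);
  return {
    x: clamp(cursor.x + move[0], grid.cols - 1),
    y: clamp(cursor.y + move[1], grid.rows - 1),
  };
}
```

A `keydown` listener would pass `event.key` to `handleKey` and store the returned cursor, calling `event.preventDefault()` for handled keys so arrows and Space do not scroll the page.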